Beads is a lightweight, graph-based issue tracker designed specifically for AI coding agents (like Claude, GPT-4, etc.) rather than human developers https://github.com/steveyegge/beads

Genkit Go 1.0 seems promising :

– Type-safe AI flows with Go structs and JSON schema validation

– Unified model interface supporting Google AI, Vertex AI, OpenAI, Ollama, and more

– Tool calling, RAG, and multimodal support

– Rich local development tools with a standalone CLI binary and Developer UI

– AI coding assistant integration via genkit init:ai-tools command for tools like the Gemini CLI

https://developers.googleblog.com/en/announcing-genkit-go-10-and-enhanced-ai-assisted-development/

London Food Map : takes Google Maps data and shows it without bias of any kind https://laurenleek.eu/food-map (based on https://scikit-learn.org/stable/modules/generated/sklearn.ensemble.HistGradientBoostingRegressor.html )

Vibe-hacking : Disrupting the first reported AI-orchestrated cyber espionage campaign

https://www.anthropic.com/news/disrupting-AI-espionage

LLM agnostic coding assistant cli : https://opencode.ai/

Being non-deterministic, LLM-based AI agents are untestable (in current software engineering terms) : the only criterion to evaluate answers is “LGTM”. See “A pragmatic guide to LLM evals for devs” https://newsletter.pragmaticengineer.com/p/evals

Arduino, new terms of service : https://www.reddit.com/r/arduino/comments/1p210nl/here_we_go_terms_of_service_update_from_qualcomm/

India gets its own GDPR like regulation DPDP : https://timesofindia.indiatimes.com/technology/tech-news/indias-first-full-fledged-privacy-law-goes-live-what-dpdp-rules-2025-mean-for-your-daily-apps/articleshow/125379900.cms

Cloudflare pingora crash https://hackaday.com/2025/11/20/how-one-uncaught-rust-exception-took-out-cloudflare/

Unveiling the Hidden World of Robot Vacuum Security https://dontvacuum.me/talks/CyberCon2023/AISA-cybercon-2023-dgiese-vacuum-robot.pdf

Jack Dorsey puts some chips on deVine (Vine reboot, nostr compatible, AI-generated content filter) https://devine.video/discovery

Climate TRACE : https://climatetrace.org/

Accurate.

PACESETTERS is a powerful alliance of 15 partners of diverse scope, scale and focus. The consortium draws on long-term experience, outstanding competences and specific expertise.

https://pacesetters.eu/about

“Notably, during Neo’s demo with the WSJ, the robot wasn’t performing any tasks autonomously. However, Børnich says Neo will perform ‘most household tasks autonomously’ when it launches next year, noting that the quality of work ‘varies and will improve dramatically very rapidly as we acquire data.’”

Neo Robot is cheating like all the other manufacturers right now.

https://www.roadtovr.com/helper-robot-neo-vr-telepresence/

“There’s more to software development than producing a working solution. Someone needs to safeguard design intent and maintainability. Maybe as LLMs democratize coding, existing developers need to evolve into architects who curate the structure of a codebase.” https://mo42.bearblog.dev/help-my-boss-started-programming-with-llms/

Nvidia and Uber might get to level 4 before Tesla really does : https://gizmodo.com/nvidia-and-uber-say-theyre-building-a-100000-vehicle-robotaxi-network-2000677945

Qualcomm buys Arduino : https://arstechnica.com/gadgets/2025/10/arduino-retains-its-brand-and-mission-following-acquisition-by-qualcomm/ With open source hardware and software, what is Qualcomm actually buying? The ability to lock down the whole environment.

uIP is a very small implementation of the TCP/IP stack https://github.com/adamdunkels/uip/tree/uip-0-9

Free and Open Source BIOS/UEFI boot firmware : https://libreboot.org/

AI Alignment : https://alignmentalignment.ai/caaac/blog/explainer-alignment

The last days of Social Media – Social media promised connection, but it has delivered exhaustion : https://www.noemamag.com/the-last-days-of-social-media/

DID-Nostr: After decades of platform lock-in, the first truly portable social graph standard has arrived https://dev.to/melvincarvalho/the-webs-missing-piece-how-did-nostr-quietly-solves-social-portability-1bg

“How can we trust what we see? Beyond form and content: trustability in the era of techno-images” (jaromil) : here

The Luciano Floridi conjecture : AI systems can either have great scope but no certainty or a constrained scope and great certainty https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5289884

roads diverge …

“Nvidia just got shut out of the Chinese market — this time by the Chinese government instead of the US.” https://techcrunch.com/2025/09/17/china-tells-its-tech-companies-they-cant-buy-ai-chips-from-nivida/

Rust contributor Nicholas Nethercote (co-author of Valgrind) is looking for a new job : https://nnethercote.github.io/2025/07/18/looking-for-a-new-job.html

dyne compiled musl downloads : https://dyne.org/musl/

Curious to see where this goes.. Subliminal Learning : Language models transmit behavioral traits via hidden signals in data https://arxiv.org/abs/2507.14805

AI, another transformers revolution, MoR (Mixture-of-Recursions) : https://www.alphaxiv.org/abs/2507.10524

Qwen Code, open source coding agent : https://javascript.plainenglish.io/they-forked-gemini-cli-and-turned-it-into-a-monster-f420971eba09

The probability of a hash collision : https://kevingal.com/blog/collisions.html
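
For a quick feel of the numbers, here is a minimal sketch using the standard birthday approximation p ≈ 1 − exp(−k(k−1)/2N), assuming hashes are uniformly distributed over N possible values. The function name and parameters are my own for illustration and are not taken from the linked article, which may derive or present the result differently.

```python
import math


def collision_probability(num_items: int, space_size: float) -> float:
    """Approximate chance that at least two of num_items hashes collide.

    Assumes each hash is drawn uniformly at random from space_size values
    (birthday approximation, accurate when num_items << space_size).
    """
    if num_items < 2:
        return 0.0
    exponent = -num_items * (num_items - 1) / (2.0 * space_size)
    # -expm1(x) equals 1 - exp(x) but keeps precision when x is tiny.
    return -math.expm1(exponent)


if __name__ == "__main__":
    # Classic birthday setting: 23 people, 365 days.
    print(collision_probability(23, 365))
    # One million items hashed to 64 bits.
    print(collision_probability(1_000_000, 2.0 ** 64))
```

Passing the size of the space rather than a bit count keeps the sketch usable for non-power-of-two cases such as the birthday example.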

Carbon : a successor language for C++ https://github.com/carbon-language/carbon-lang

Decentralize : https://decodeproject.eu/ ( https://dcentproject.eu/ )

Hardware memory models : https://research.swtch.com/hwmm

Who remembers UUCP ? NNCP : https://salsa.debian.org/jgoerzen/docker-nncpnet-mailnode/-/wikis/home

ProtectEU: A European Internal Security Strategy https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=celex:52025PC0148

Gödel’s theorem debunks the most important AI myth. AI will not be conscious | Roger Penrose (Nobel) https://www.youtube.com/watch?v=biUfMZ2dts8

Prompting AIs Will Turn Us into “Benevolent Dictators” ? https://paulborile.medium.com/prompting-ais-will-turn-us-into-benevolent-dictators-7a1ba270c0b2

Tuning Go for ms to μs performance : https://renaldid.medium.com/from-milliseconds-to-microseconds-tuning-go-for-extreme-performance-6b1ce871f98f

Interesting analogy : https://intenseminimalism.com/2025/learning-and-leveraging-ai-as-interaction-material-in-your-product/

Lost in Linux kernel tuning ? explore all sysctl settings https://sysctl-explorer.net/

The term for this style of on-command software development is “vibe coding” — Andrej Karpathy, cofounder of OpenAI, coined it last month and it instantly caught on. The idea: Instead of developers writing literal lines of code, anyone can direct AI to build based on a prompt… and tweak from there. In Karpathy’s words: “it’s not really coding — I just see stuff, say stuff, run stuff, and copy paste stuff, and it mostly works.”

https://blog.medium.com/a-definition-of-vibe-coding-or-how-ai-is-turning-everyone-into-a-software-developer-07346324b826

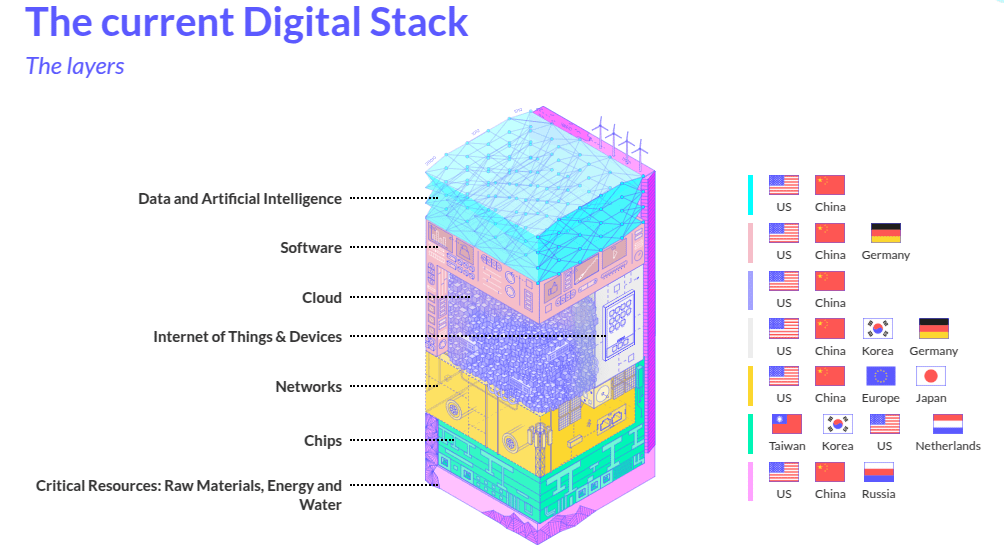

EuroStack – A European Alternative for Digital Sovereignty https://www.euro-stackreport.info/

Biggest sponsor of Go language is … Microsoft 🙂 https://devblogs.microsoft.com/typescript/typescript-native-port/

Porting Linux to Apple Silicon https://asahilinux.org/

Platform-independent low-level JIT compiler : https://github.com/zherczeg/sljit

Apollo mission audio/images in realtime (obviously we have never been to the moon, they did all this with photoshop in the 70s 🙂 ) https://apolloinrealtime.org/

Permacomputing : https://permacomputing.net/concepts/ get the idea

We are (Are we) destroying software (?) https://antirez.com/news/145

The study that changed everything in AI/LLMs : “Attention Is All You Need”, Google Brain 2017 https://arxiv.org/abs/1706.03762v1

Exit cloud for a big service : https://world.hey.com/dhh/the-big-cloud-exit-faq-20274010

The Earth-Sun Lagrange L2 point is getting crowded : Gaia, Euclid, Webb and the next one is https://en.wikipedia.org/wiki/Nancy_Grace_Roman_Space_Telescope

Testing non deterministic systems : https://medium.com/@sermineldek/testing-non-deterministic-behaviors-in-ai-systems-challenges-and-innovations-6e1996025504

Emissions fell by 4% in Q1 and 2.6% in Q2, while GDP grew by 0.3% and 1%, respectively, compared to the same quarters in 2023, according to the latest statistics. This demonstrates that climate action and economic growth can go hand in hand : https://ec.europa.eu/eurostat/en/web/products-eurostat-news/w/ddn-20241115-2